Prompt and Circumstances

Evaluating the Efficacy of Human Prompt Inference in AI-Generated Art

Joseph Spracklen, Raveen Wijewickrama, Murtuza Jadliwala · UT San Antonio

Bimal Viswanath · Virginia Tech | Anindya Maiti · University of Oklahoma

The Prompt Economy

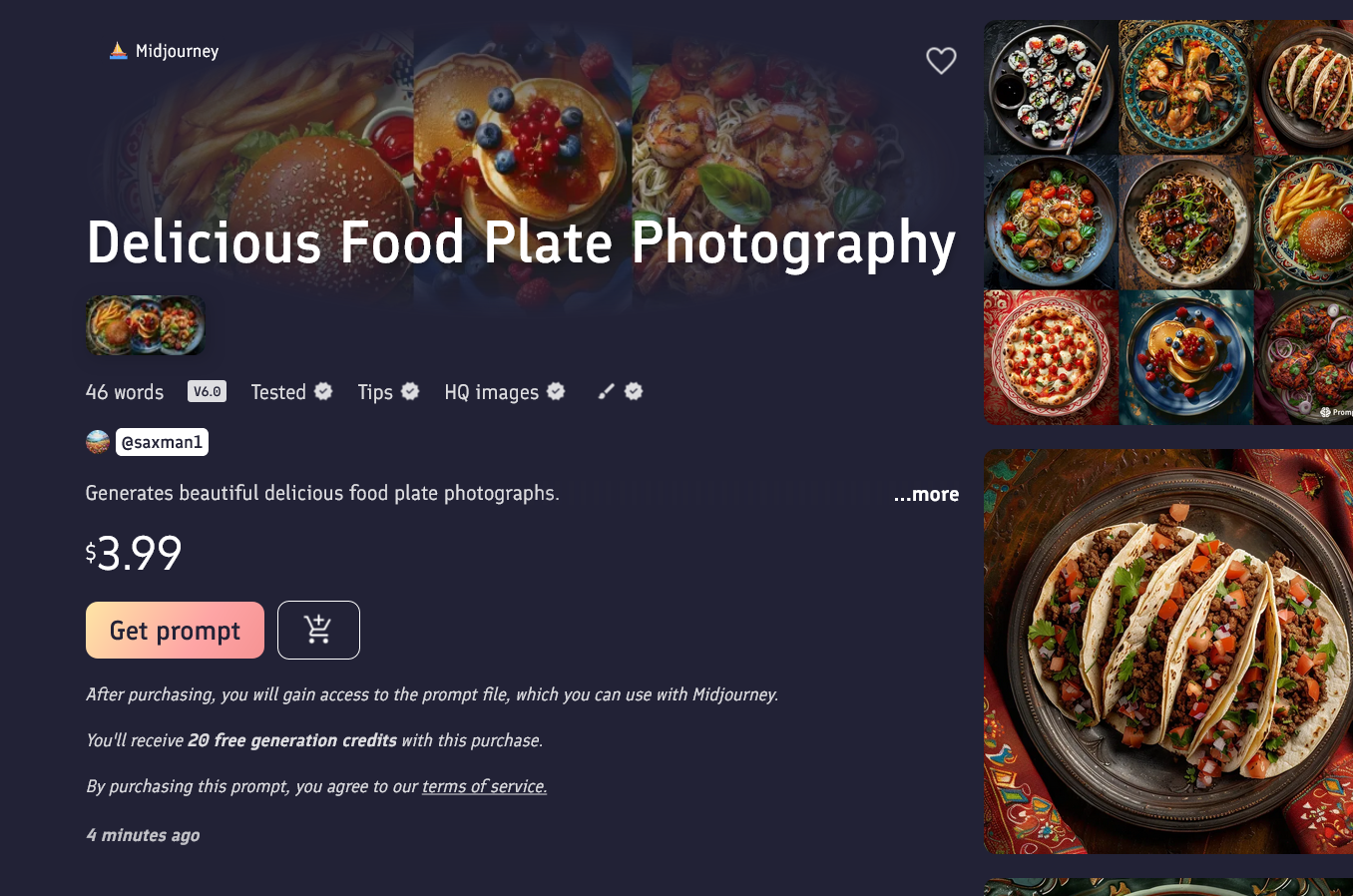

Prompt Marketplaces

Platforms like PromptBase, PromptHero, and CivitAI let creators buy, sell, and share text prompts for AI image generation.

Prompts as IP

Sellers assert intellectual property rights over their prompts, claiming them as proprietary creative assets. Platforms keep the actual prompt hidden until purchase.

The Vulnerability

Every marketplace listing shows sample images. Can someone reverse-engineer the secret prompt just by looking at those images?

What is Prompt Inference?

Prompt Anatomy

Prompts have a subject (what the image depicts: cat, robot, astronaut…) and one or more modifiers (style, lighting, mood, color palette, medium…).

Even small modifier changes radically alter the image — capturing stylistic intent from visuals alone is deeply ambiguous.

Research Questions & Hypotheses

Study Overview

230 participants (campus + MTurk)

200 prompts (100 controlled + 100 uncontrolled)

retained after filtering

Part I — Uncontrolled Dataset

100 real-world prompts sampled from PromptHero. Wide stylistic variety. Participants wrote free-form prompts describing five AI-generated images they were shown. Open-ended inference task.

Part II — Controlled Dataset

100 prompts built from scraped Lexica data. Fixed subjects (man, woman, astronaut, cat, robot) + 121 stylistic modifiers from Midjourney's keyword list. Ground truth known; enables direct comparison.
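As a rough illustration of how a controlled prompt set with known ground truth could be assembled, the sketch below pairs one fixed subject with a few sampled modifiers. The `MODIFIERS` list is a hypothetical stand-in for the 121-keyword list, and both the choice of two modifiers per prompt and the comma-joined format are assumptions, not details taken from the study.

```python
import random

# Fixed subjects named on the slide.
SUBJECTS = ["man", "woman", "astronaut", "cat", "robot"]

# Hypothetical stand-ins for the 121 stylistic modifiers (the real list is not reproduced here).
MODIFIERS = ["watercolor", "cinematic lighting", "pastel palette", "art deco", "moody"]


def build_prompt(rng, n_modifiers=2):
    """Return (prompt, subject, modifiers), keeping the ground truth next to the text."""
    subject = rng.choice(SUBJECTS)
    modifiers = rng.sample(MODIFIERS, n_modifiers)  # distinct modifiers, no repeats
    prompt = ", ".join([subject] + modifiers)
    return prompt, subject, modifiers


rng = random.Random(0)  # fixed seed so the toy dataset is reproducible
dataset = [build_prompt(rng) for _ in range(100)]
```

Storing the subject and modifiers next to each prompt is what makes a direct comparison against a participant's guess possible later.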

Datasets & Models

Four txt2img Models

- Midjourney v5: closed-source, Discord interface; highly stylized

- Stable Diffusion XL (SDXL): open-source latent diffusion model

- DreamShaper XL: SDXL fine-tune for photo-realistic fantasy imagery

- Realistic Vision v5: SD 1.5 fine-tune for photo-realism

CFG Scale: 5 · Sampling Steps: 40 · Euler sampler

Midjourney used its default model settings (parameters not user-controllable)

Participant Profile (n=230)

- Most aged 18–44; 59% male, 38% female, 2% other

- Moderate familiarity with generative AI: ~⅓ were only "slightly familiar" with image generation tools

- Participants saw 5 images each from controlled + uncontrolled sets and typed their best-guess prompts

Response filtering: we excluded entries that were missing a subject or modifier, contained random or blank text, or were not in English

Evaluation: Image Similarity Metrics

We measure inference quality by generating images from inferred prompts and comparing them to original-prompt images using three metrics:

ImageHash

Perceptual hashes compared by Hamming distance. Score 0–64 (lower = more similar). Captures shallow visual cues.
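To make the 0–64 range concrete, here is a minimal sketch of a 64-bit average hash and the Hamming distance between two hashes. It assumes the image has already been reduced to an 8×8 grayscale grid, and it uses the average-hash variant purely for illustration; the slide does not say which perceptual hash was used.

```python
def average_hash(pixels):
    """Map an 8x8 grid of grayscale values to a 64-bit integer hash."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        # One bit per pixel: 1 if brighter than the mean, else 0.
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(hash_a, hash_b):
    """Count the bit positions where the two hashes differ (0 to 64)."""
    return bin(hash_a ^ hash_b).count("1")


# Toy images: a brightness gradient and its inverse.
gradient = [[row * 8 + col for col in range(8)] for row in range(8)]
inverted = [[63 - value for value in row] for row in gradient]

same = hamming_distance(average_hash(gradient), average_hash(gradient))
opposite = hamming_distance(average_hash(gradient), average_hash(inverted))
```

Identical images give a distance of 0, while the inverted gradient flips every bit and reaches the maximum of 64. Because each bit only records "brighter or darker than average", the score reacts to coarse layout rather than style.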

LPIPS

Learned Perceptual Image Patch Similarity in deep feature space. Score 0–1 (lower = more similar). Reflects structure, texture, color composition.

CLIP Score

Cosine similarity of CLIP (ViT-L/14, ViT-B/32) embeddings. Score 0–1 (higher = more aligned). Measures semantic text–image alignment.
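The cosine-similarity step can be sketched in a few lines once embeddings are available. The vectors below are made-up toy values, not real CLIP embeddings, and obtaining embeddings from a CLIP model is outside this sketch.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Toy embeddings (hypothetical values for illustration only).
emb_a = [0.2, 0.1, 0.7]
emb_b = [0.4, 0.2, 1.4]   # same direction as emb_a, different length
emb_c = [1.0, -2.0, 0.0]  # orthogonal to emb_a

aligned = cosine_similarity(emb_a, emb_b)
unrelated = cosine_similarity(emb_a, emb_c)
```

Only the direction of the embedding matters, so `emb_a` and `emb_b` score as fully aligned even though their magnitudes differ.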

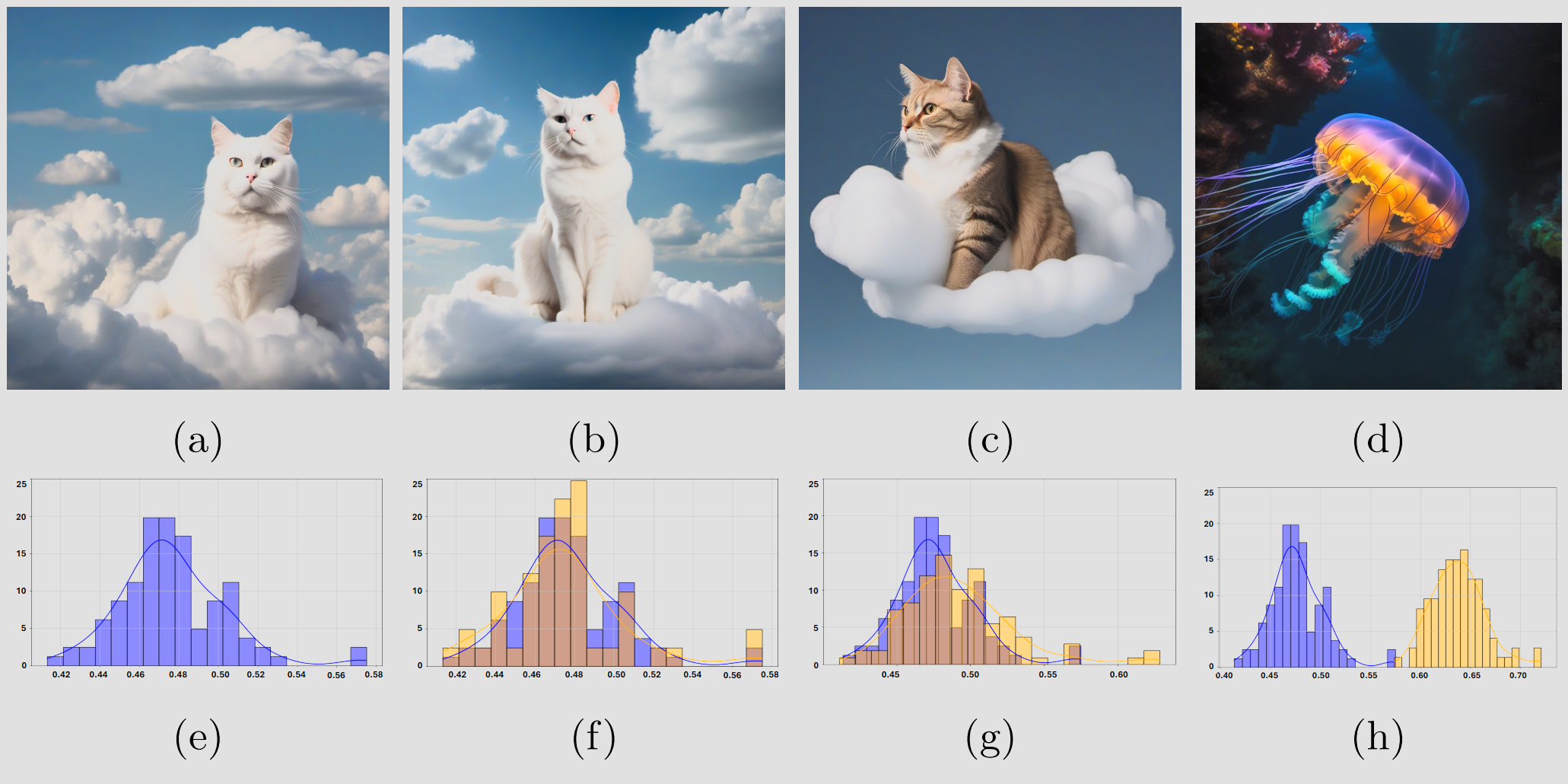

A Novel Evaluation Framework: Distribution-Level Inference

The Problem with Single-Image Comparison

txt2img models are stochastic — the same prompt produces different images each run. A single lucky match doesn't prove prompt equivalence.

Our Approach: Distributional Equivalence

For each prompt, generate 200 reference images (original prompt) and 50 inferred images (participant prompt). Treat each as a sample from a probability distribution.

Kolmogorov–Smirnov Test

Two-sample KS test checks whether the two similarity-score distributions are statistically indistinguishable (p > 0.05 = a "hit").
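The hit criterion can be sketched as follows. This is a standard-library illustration, not the study's code: it computes the two-sample KS statistic directly and uses a common asymptotic approximation for the p-value, whereas in practice a statistics library routine such as SciPy's `scipy.stats.ks_2samp` would normally be used. The score lists are synthetic, and the names `ks_statistic`, `ks_pvalue`, and `is_hit` are hypothetical.

```python
import math


def ks_statistic(xs, ys):
    """Largest vertical gap between the two empirical CDFs."""
    xs, ys = sorted(xs), sorted(ys)
    n, m = len(xs), len(ys)
    i = j = 0
    d = 0.0
    while i < n and j < m:
        v = min(xs[i], ys[j])
        while i < n and xs[i] <= v:  # step past all values <= v (handles ties)
            i += 1
        while j < m and ys[j] <= v:
            j += 1
        d = max(d, abs(i / n - j / m))
    return d


def ks_pvalue(d, n, m):
    """Asymptotic two-sided p-value (approximation; exact methods differ slightly)."""
    root = math.sqrt(n * m / (n + m))
    lam = (root + 0.12 + 0.11 / root) * d
    if lam < 0.2:  # the series below is numerically 1 in this range
        return 1.0
    total = 0.0
    for k in range(1, 101):
        total += 2 * (-1) ** (k - 1) * math.exp(-2 * k * k * lam * lam)
    return min(max(total, 0.0), 1.0)


def is_hit(reference_scores, inferred_scores, alpha=0.05):
    """A hit means the test fails to distinguish the two score distributions."""
    d = ks_statistic(reference_scores, inferred_scores)
    return ks_pvalue(d, len(reference_scores), len(inferred_scores)) > alpha


# Synthetic similarity scores: 200 reference values and two sets of 50 inferred values.
reference = [i / 200 for i in range(200)]          # spread evenly over [0, 1)
inferred_same = [i / 50 for i in range(50)]        # same spread, fewer samples
inferred_shifted = [0.5 + i / 100 for i in range(50)]  # concentrated in [0.5, 1)

hit_same = is_hit(reference, inferred_same)
hit_shifted = is_hit(reference, inferred_shifted)
```

With these toy inputs the evenly spread sample counts as a hit and the shifted one does not. Note that a hit is a failure to reject equality at the chosen threshold, which is weaker than proof that the two prompts are equivalent.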

Fig. 6 — Same prompt (a,b) → overlapping distributions (f); different prompt (d) → diverging distribution (h). A hit = distributions are statistically indistinguishable (p > 0.05).

Human–AI Combined Prompt Inference

Example Merge

CLIP Interrogator: "a man in a steampunk suit and top hat standing in front of a giant clock with gears, Bastien L. Deharme, fantasy art"

GPT-4 output: "a man in a steampunk suit and top hat stands in a factory surrounded by gears and clocks, embodying a fantasy art portrait."

LLM merging was chosen over simple concatenation to preserve linguistic coherence, balance human and AI contributions, and avoid keyword repetition that could bias image generation.

Results — RQ1: Human Prompt Inference Performance

| Metric | Controlled Hit Rate | Uncontrolled Hit Rate |
|---|---|---|
| ImageHash | 53.29% | 53.72% |
| LPIPS | 22.89% | 9.77% |
| CLIP B32 | 7.28% | 7.56% |
| CLIP L14 | 7.05% | 7.21% |

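The LPIPS gap between controlled (22.89%) and uncontrolled (9.77%) hit rates indicates that open-ended prompts from the wild are far harder to reverse-engineer than structured laboratory prompts. ImageHash and CLIP hit rates, by contrast, are nearly identical across the two sets.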

Results — RQ2: Linguistic Features & Model Effects

Linguistic Features (CLIP & LPIPS)

- Longer prompts only weakly correlate with higher hit rates

- CLIP mean correlates positively with hit rates

- LPIPS mean correlates negatively with hit rates

Model Choice Effects

DreamShaper XL: strongest positive relation with success (controlled r = +0.15).

Realistic Vision v5: higher variability (controlled r = +0.14 for LPIPS variance), weaker inference success.

SDXL: largely neutral. Trends are consistent across controlled and uncontrolled sets.
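For readers unfamiliar with the r values above, the sketch below computes a correlation between a binary "model used" indicator and a binary hit outcome. It assumes a Pearson coefficient (equivalent to a point-biserial correlation when one variable is binary); the slide does not state which coefficient was used, and the data are invented toy values.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Toy data (hypothetical): 1 = image came from the model of interest, 1 = hit.
used_model = [1, 1, 1, 0, 0, 0, 1, 0]
was_hit = [1, 0, 1, 0, 0, 1, 1, 0]

r = pearson_r(used_model, was_hit)
```

The toy data are deliberately much more strongly related (r = 0.5) than the values on the slide; coefficients around +0.15 describe a weak tendency rather than a dependable effect.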

Results — RQ3: Does Human–AI Collaboration Help?

| Metric | Human Only | Human–AI Combined | Change |
|---|---|---|---|
| ImageHash | 53.29% | 60.62% | ↑ +7.3pp |
| LPIPS | 22.89% | 18.59% | ↓ −4.3pp |
| CLIP B32 | 7.28% | 5.89% | ↓ −1.4pp |
| CLIP L14 | 7.05% | 5.77% | ↓ −1.3pp |

The ImageHash improvement (+7.3pp) reflects better pixel-level surface similarity, not meaningful perceptual alignment. It is effectively a false positive, which is a further reason we exclude ImageHash from the core analysis.
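The percentage-point changes in the table can be reproduced directly from the two hit-rate columns. The short sketch below does that arithmetic using only the figures reported above.

```python
# Hit rates (in percent) copied from the RQ3 table.
human_only = {"ImageHash": 53.29, "LPIPS": 22.89, "CLIP B32": 7.28, "CLIP L14": 7.05}
combined = {"ImageHash": 60.62, "LPIPS": 18.59, "CLIP B32": 5.89, "CLIP L14": 5.77}

# Percentage-point change = combined rate minus human-only rate.
change_pp = {
    metric: round(combined[metric] - human_only[metric], 2)
    for metric in human_only
}

# Metrics where adding the AI-generated prompt lowered the hit rate.
worse_with_ai = [metric for metric, delta in change_pp.items() if delta < 0]
```

Only ImageHash moves upward; LPIPS and both CLIP variants decline, which is the pattern the takeaway above relies on.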

Discussion: Key Takeaways

What Humans Can Do

Broad subject matter is recoverable from images. Participants reliably identify the main theme, object, or scene — especially in controlled settings with common subjects.

What Remains Hard

Precise stylistic modifiers — lighting, texture, color palette, artistic medium, mood — are extremely difficult to reconstruct. CLIP hit rates of ~7% confirm this ceiling.

Metric Selection Matters

ImageHash inflates success by capturing only shallow pixel patterns. LPIPS and CLIP are more honest evaluators and reveal genuinely low inference success for unconstrained prompts.

Implications for Prompt IP

Results suggest that prompts are relatively resilient to reverse engineering under current human + AI inference techniques.

Human–AI Collaboration Gap

Effective human-AI co-creation for prompt inference remains unsolved. Future methods should optimize for LPIPS/CLIP alignment, not shallow surface similarity.

User Background Doesn't Matter Much

AI familiarity and arts background had limited impact on success rates — task and system-level constraints dominate over user expertise.

Conclusion

- Humans can infer what an image depicts, but not how it was styled — subjects yes, modifiers no

- LLM-augmented inference (CLIP Interrogator + GPT-4 merging) does not outperform human-only prompting on meaningful metrics

- Prompt-based IP appears relatively resilient to reverse engineering under current techniques

- Raises key questions for web platforms around content provenance, authorship, and secure prompt design

Q&A

Full Paper Pre-print

khoitrinh@ou.edu

EvoMUSART 2026